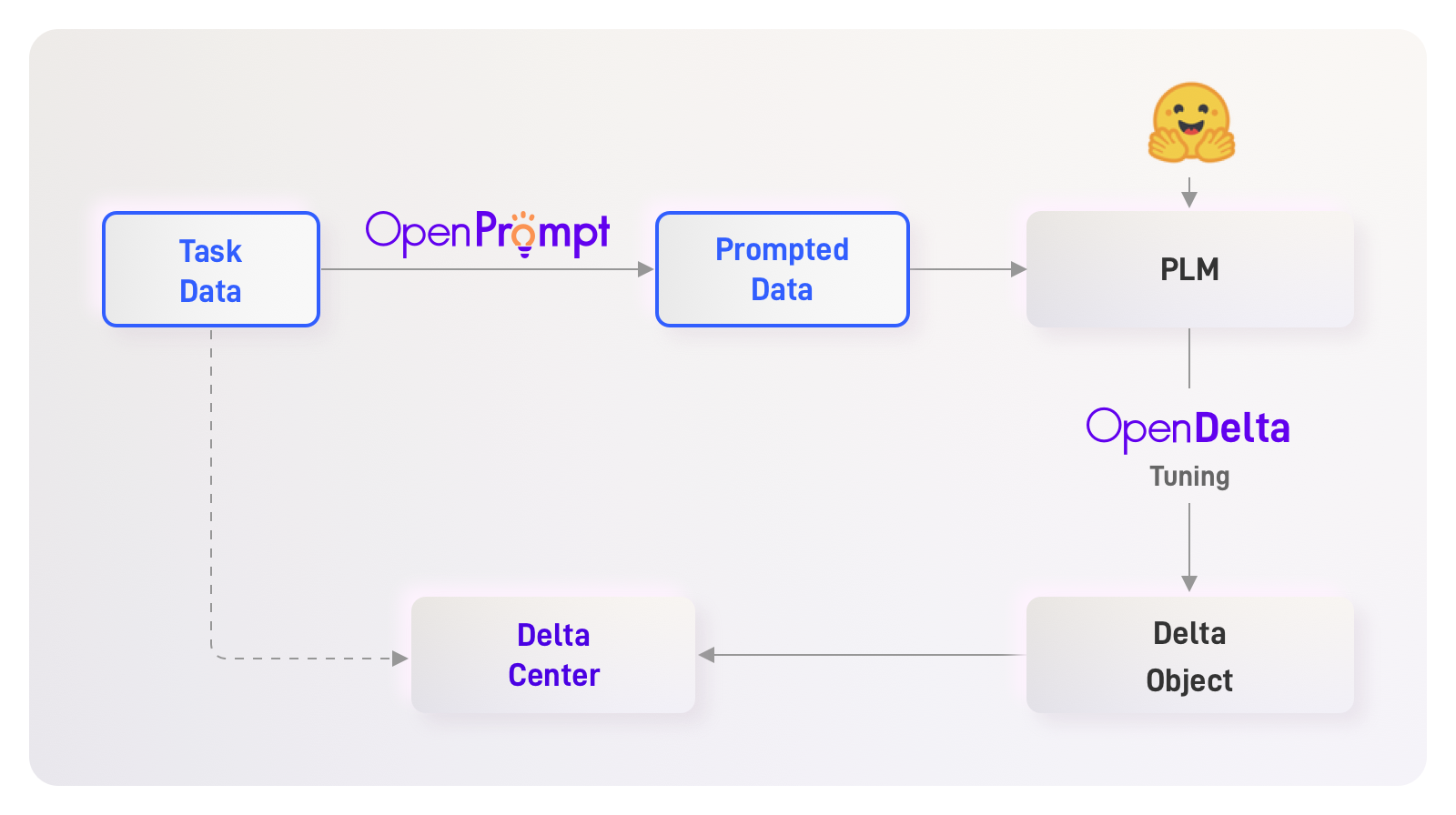

OpenDelta

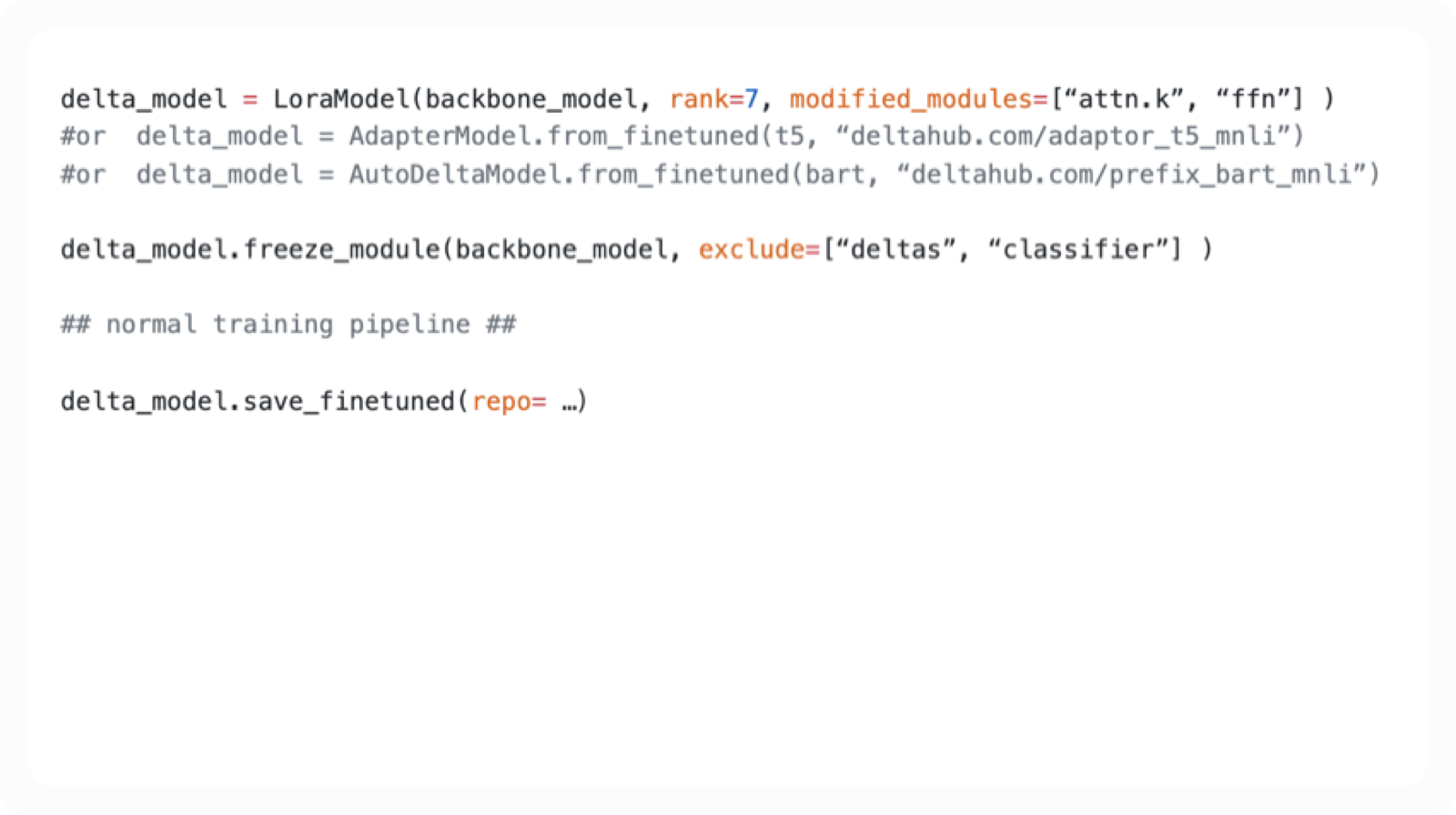

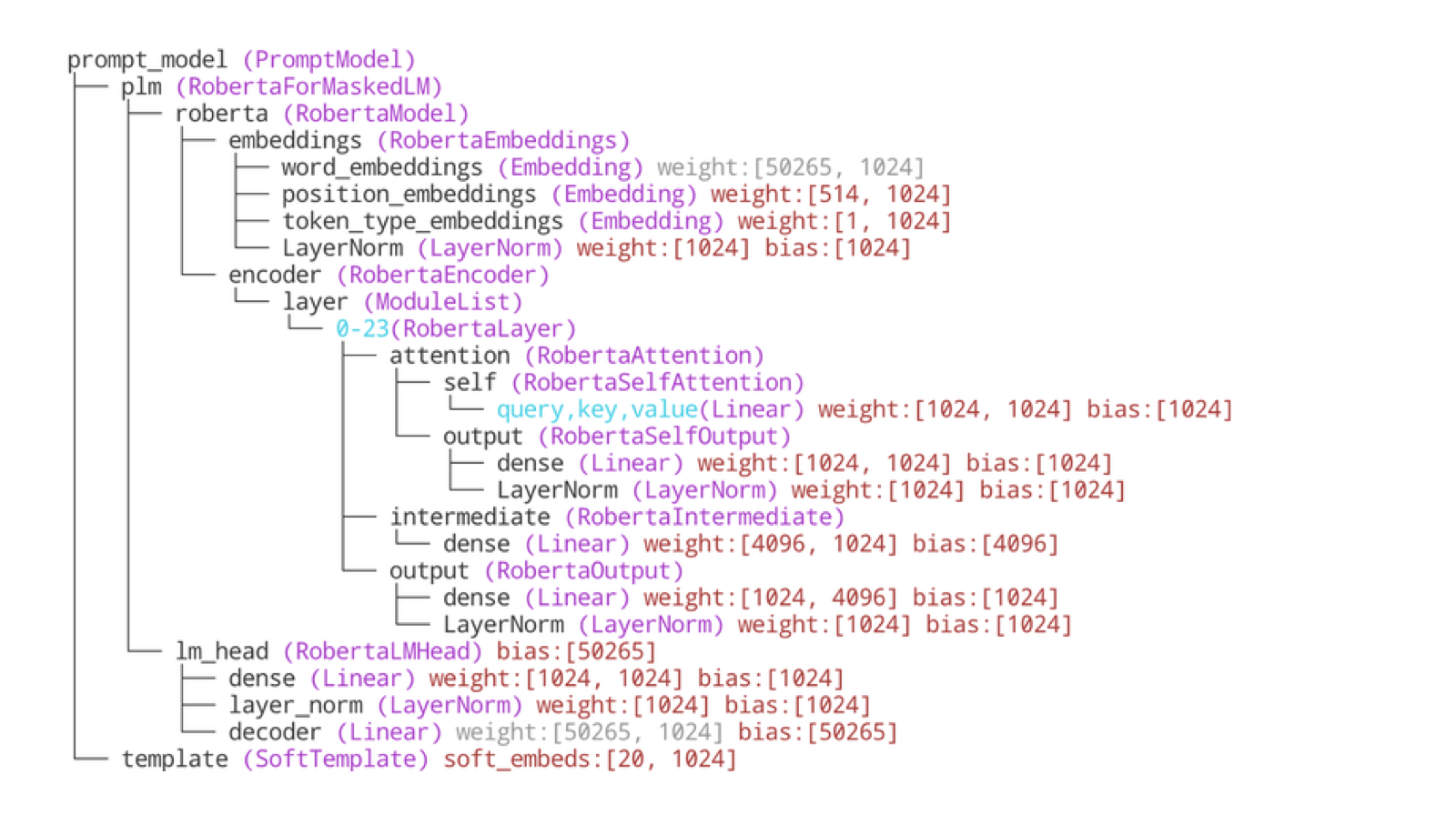

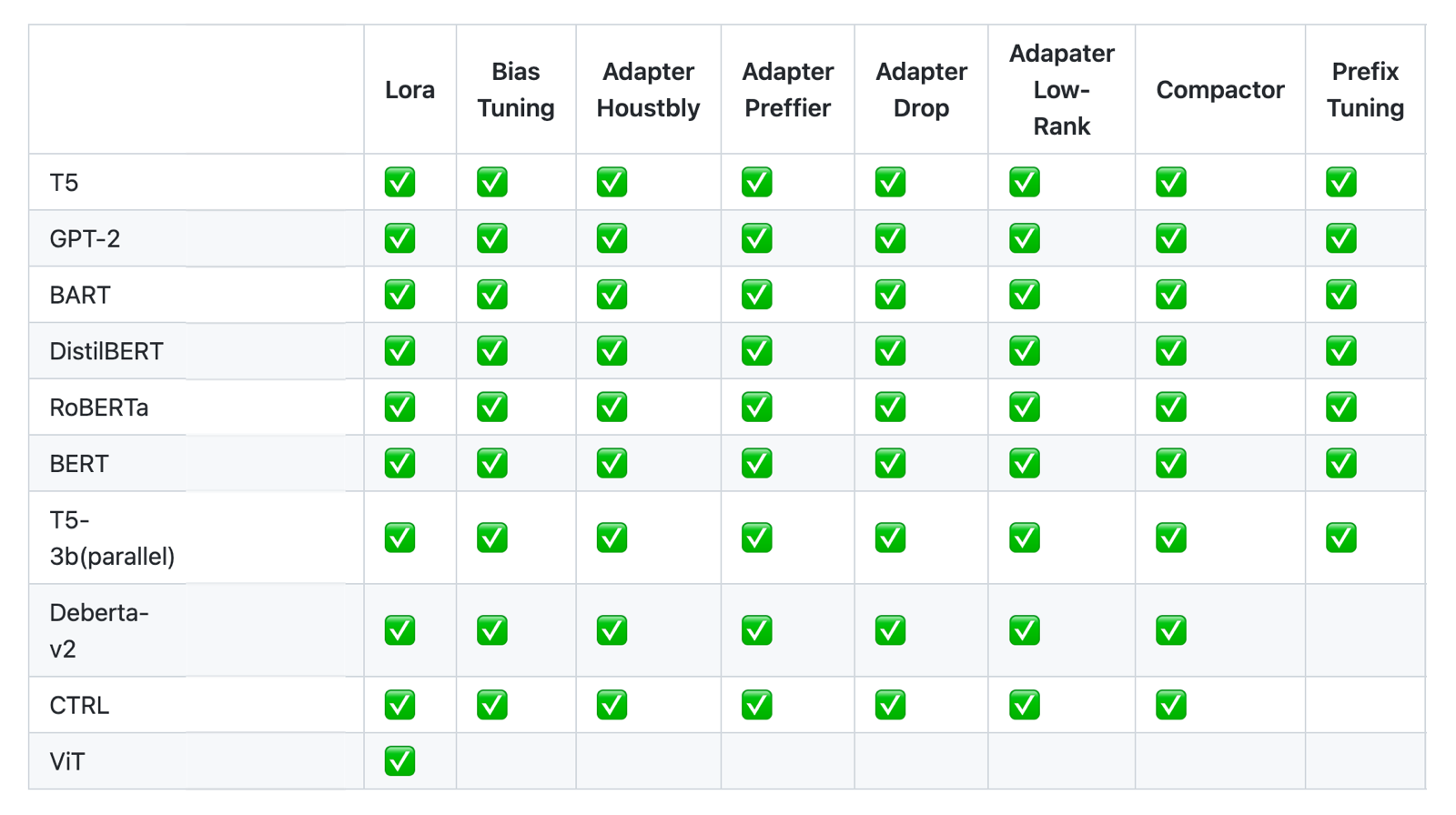

Tiny parameters leverage big models. OpenDelta performs parameter-efficient tuning for big models. By updating only a small fraction of the parameters (less than 5%), these algorithms can achieve the same effect as full-parameter fine-tuning.
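
To give a feel for how small that fraction can be, here is a minimal sketch that counts parameters for one common parameter-efficient method, a low-rank additive update (LoRA-style) on a single weight matrix. It is plain arithmetic and does not use OpenDelta's API; the function name, the matrix dimensions, and the rank are all hypothetical values chosen for illustration.

```python
# Illustrative arithmetic only; this does not call OpenDelta.
# Assumption: a frozen weight matrix W (d_out x d_in) receives a trainable
# low-rank update B @ A, with B of shape (d_out x rank) and A of shape
# (rank x d_in). Only A and B are updated during tuning.


def low_rank_trainable_fraction(d_in: int, d_out: int, rank: int) -> float:
    """Return the share of parameters that are trainable for one adapted matrix."""
    frozen = d_in * d_out            # original weights, kept fixed
    added = rank * (d_in + d_out)    # new low-rank factors, trained
    return added / (frozen + added)


# Hypothetical sizes: a 1024 x 1024 projection with a rank-8 update.
fraction = low_rank_trainable_fraction(d_in=1024, d_out=1024, rank=8)
print(f"trainable fraction: {fraction:.2%}")
```

With these example sizes the trainable share of the adapted matrix is well under 5%. Across a whole model the share would typically be lower still, because only some of the weight matrices receive an update.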
